Developers will be able to train neural networks on their own computers with the next PyTorch release, which will support Apple GPUs.

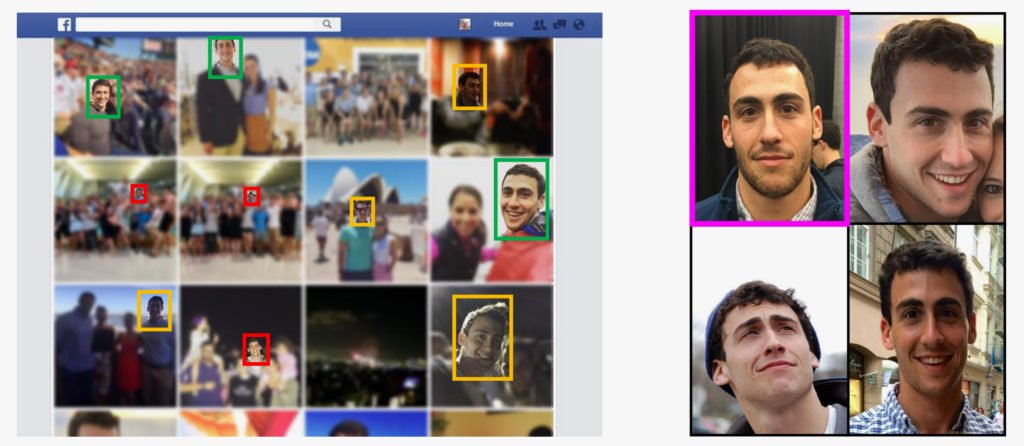

According to a new investigation, criminals can easily steal someone else’s identity by fooling live face recognition software with deepfakes.

Sensity AI, a business that fights identity fraud, conducted a series of spoof attacks.

Engineers scanned an individual’s image from an ID card and mapped it onto the face of another person.

Sensity then attempted to breach live face recognition systems by tricking them into believing the pretend attacker was a genuine user.

So-called “liveness tests” use photos or video streams from cameras to validate identities in real time, such as face recognition for unlocking mobile phones.

Nine out of 10 vendors failed Sensity’s live deepfake attacks.

The companies vulnerable to deepfake attacks were not named by Sensity.

“We told them, ‘Look, you’re exposed to this kind of attack,’ and they answered, ‘We don’t care,’” Sensity’s chief operating officer Francesco Cavalli told The Verge.

“We chose to share it because we believe that the public, both at a corporate level and in general, should be aware of these concerns.”

Relying on liveness tests is therefore risky, especially if they are used for automatic biometric authentication by banks or the IRS in the United States.

However, these attacks aren’t always simple to carry out.

In its research, Sensity said that hijacking mobile cameras and injecting pre-made deepfake models required a specific phone.

Soon, PyTorch developers will be able to train AI models on their Apple laptops.

Apple’s newer machines have custom-made GPUs, but PyTorch developers haven’t been able to take advantage of the hardware’s capabilities while training machine learning models.

With the upcoming PyTorch v1.12 release, that will change.

“We are delighted to introduce support for GPU-accelerated PyTorch training on Mac in conjunction with Apple’s Metal engineering team,” the PyTorch community wrote in a blog post this week.

“Until now, PyTorch training on the Mac relied solely on the CPU, but with PyTorch v1.12, developers and researchers will be able to take advantage of Apple silicon GPUs for substantially faster model training.”

With the new release, Mac users will be able to train neural networks on their own computers rather than renting computational resources from cloud computing services.

A representative told The Register that PyTorch v1.12 will be released “sometime in the second half of June.”

Apple’s GPUs are better at training machine learning models than its CPUs, allowing for faster training of larger models.
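As a rough sketch of what opting into the GPU might look like once v1.12 is installed, the snippet below picks a device and runs a single toy training step. The device name `"mps"` and the `torch.backends.mps.is_available()` check come from PyTorch's own documentation rather than from the blog post quoted above. The helper names `choose_device` and `run_one_training_step`, the tiny linear model, and the random data are hypothetical and purely illustrative.

```python
def choose_device(mps_available: bool, cuda_available: bool = False) -> str:
    """Pick a device name, preferring Apple's GPU backend when it is present."""
    if mps_available:
        return "mps"  # Metal Performance Shaders backend on Apple silicon
    if cuda_available:
        return "cuda"
    return "cpu"


def run_one_training_step() -> str:
    """Run a single toy training step and return the device type that was used."""
    # Imported here so choose_device() can be used without PyTorch installed.
    # Assumes PyTorch v1.12 or later; older releases lack torch.backends.mps.
    import torch

    device = torch.device(
        choose_device(torch.backends.mps.is_available(), torch.cuda.is_available())
    )

    # Hypothetical toy model and random data, just to exercise the device.
    model = torch.nn.Linear(4, 2).to(device)
    optimizer = torch.optim.SGD(model.parameters(), lr=0.1)
    inputs = torch.randn(8, 4, device=device)
    targets = torch.randn(8, 2, device=device)

    optimizer.zero_grad()
    loss = torch.nn.functional.mse_loss(model(inputs), targets)
    loss.backward()
    optimizer.step()
    return device.type
```

Calling `run_one_training_step()` on a Mac with a supported GPU should report `mps`, while other machines fall back to `cuda` or `cpu`, so the same script can run unchanged on each.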

Medical models with fictitious data

Anthem, a US health insurer, is collaborating with Google Cloud to develop a synthetic data pipeline for machine learning models.

The Chocolate Factory will generate up to two petabytes of synthetic data resembling medical records and healthcare claims.

These artificial datasets, which are less risky to use than real data gathered from patients, will be used to train AI systems that can better detect fraud.

The models will eventually evaluate real data and may, for example, automatically review people’s health records to hunt for bogus claims.

Anil Bhatt, Anthem’s chief information officer, told the Wall Street Journal that “more and more… synthetic data is going to overtake and be the way people perform AI in the future.”

Using synthetic data sidesteps privacy concerns and could also reduce bias.

Experts have told The Register, however, that such synthetic examples don’t work in every machine learning application.

“In our opinion, synthetic data models will ultimately power the promise of what big data can bring,” said Chris Sakalosky, managing director, Google Cloud, US Healthcare & Life Science.

“We believe that is what will propel this industry ahead.”

Former Apple AI director joins DeepMind

DeepMind has hired a former Apple director of machine learning who reportedly resigned over the company’s return-to-work policy.

Ian Goodfellow oversaw the iGiant’s “Special Projects Group,” which worked on the company’s self-driving car software.

He reportedly quit after Apple asked employees to return to the office three days a week beginning May 23.

The policy has since been postponed due to a rise in COVID cases.

According to Bloomberg, he will now join DeepMind.

Interestingly, Goodfellow will apparently work for the UK-based research lab as an “individual contributor.”

He is well known for inventing generative adversarial networks, a type of neural network commonly used to create AI-generated images, and for co-authoring the renowned Deep Learning textbook from 2015.

Goodfellow worked as an AI researcher at Google and OpenAI for almost three years before joining Apple as a director.